Soundscapes for Web Games: The Layer Beneath Your SFX - Part 1

Part 1 of 2. Part 2 is the Howler implementation: a ~150-line engine, a six-function bus adapter, and host bindings for React, Phaser, and three.js.

So, I’ll be honest. I shipped web games for years before I realized why they all sounded a little off to me. The samples were fine. The music was fine. The footsteps were tight, the UI clicks were crunchy in all the right ways. And yet, every time I muted the music and just stood there in the level, it felt like standing in a stage set with the lights on between takes. 😅

It took me a long time to name the thing that was missing.

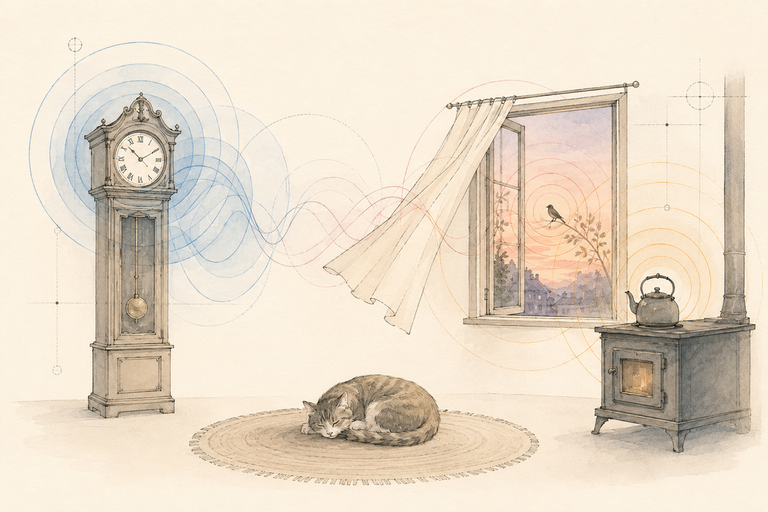

Most web games sound flat, and it isn’t because the samples are bad. It’s because the game treats audio as event SFX. A footstep here, a click there, a music loop in the background. The space between those events is silent, and silence is what makes a room feel empty before anything happens.

The fix is a layer underneath the events. I call it a Soundscape, and it’s what tells your ear “you are somewhere” before a single thing is interacted with.

Layered comparison (interactive demo): Music / Bed / Emitters / SFX

- Music: composed loop, market square cafe

- Bed: restaurant room tone, looping under everything

- Emitters: cash register, espresso, cups, phone, on randomized timers

- SFX: a UI sound fired on click

Soundscape vs music vs SFX

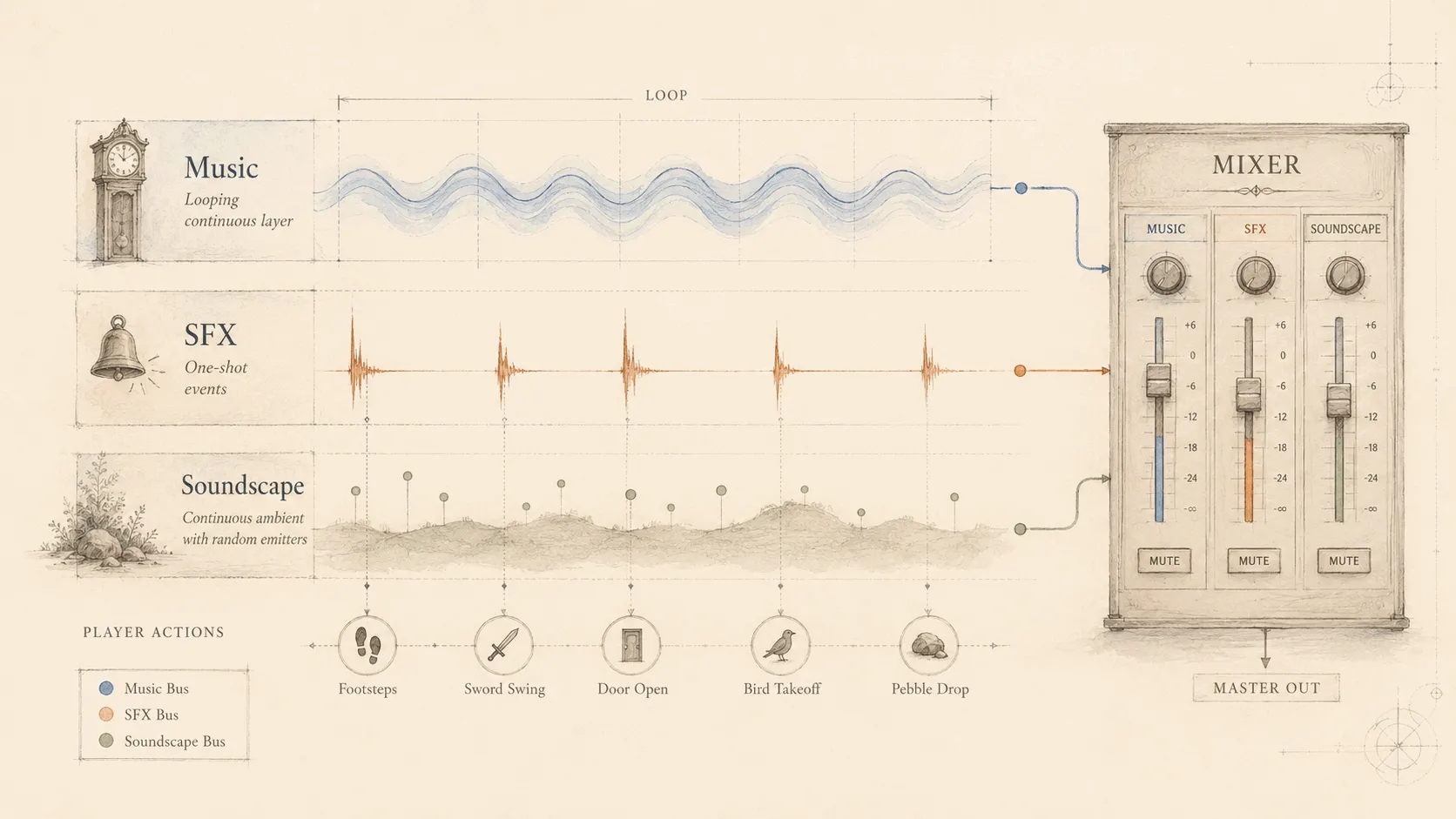

These three get conflated constantly. They are not the same thing, and they really do not want the same treatment from your mixer.

| Layer | What it is | Triggered by | Wants |
|---|---|---|---|
| Music | A composed, looping track | Scene or beat | Its own bus, fade in/out, ducks rarely |
| SFX | One-shot reactions to gameplay | Player or game | Tight latency, no fades |
| Soundscape | Ambient bed plus randomized non-musical emitters | A timer, not you | A bus that ducks under dialog |

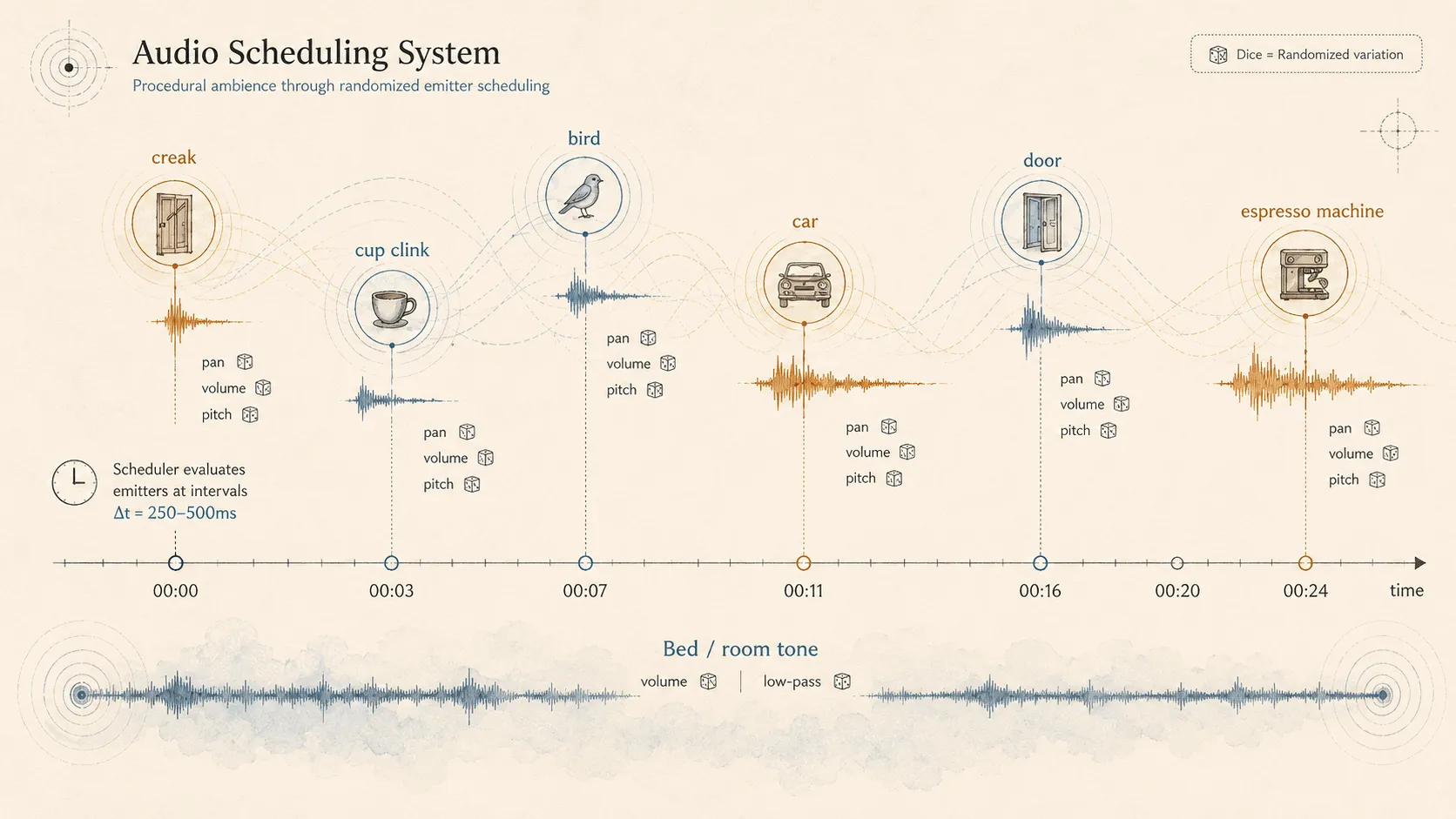

The Soundscape has two parts. The Bed is a looping ambience (room tone, wind, distant traffic). The Emitters are short non-musical samples scheduled on a timer with randomized pan, volume, and pitch (a creaking floorboard, a bird, a far-off door). Together they read as “this place exists.”

That’s the whole idea.
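To make the two parts concrete, here is a rough sketch of how a Soundscape could be described as data. Everything in it is an assumption for illustration: the interface names, the field names, the file paths, and the interval values are made up, and none of it is the API from Part 2.

```typescript
// Hypothetical config shape for a Soundscape. Every name, path, and number
// here is illustrative only.

interface BedConfig {
  src: string;    // looping ambience file (room tone, wind, distant traffic)
  volume: number; // linear gain, 0..1
}

interface EmitterConfig {
  name: string;
  pool: string[];               // short one-shot samples to pick from
  intervalMs: [number, number]; // min and max gap between fires
}

interface SoundscapeConfig {
  bed: BedConfig;
  emitters: EmitterConfig[];
}

const cafe: SoundscapeConfig = {
  bed: { src: "audio/restaurant-room-tone.ogg", volume: 0.5 },
  emitters: [
    {
      name: "cash-register",
      pool: ["audio/register-1.ogg", "audio/register-2.ogg", "audio/register-3.ogg"],
      intervalMs: [8000, 20000],
    },
    {
      name: "espresso-machine",
      pool: ["audio/espresso-1.ogg", "audio/espresso-2.ogg", "audio/espresso-3.ogg"],
      intervalMs: [12000, 30000],
    },
  ],
};
```

The bed is one file that loops; each emitter is a small pool of one-shots plus a time window. Nothing about playback lives in the config, which is what lets the same description drive different hosts.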

Why it matters more on the web than native

Native engines hand you a spatial mixer, an audio graph, bus routing, and priority systems for free. Unity has AudioMixerGroup. Unreal has Submixes and Sound Cues. You wire the graph in an editor and forget about it.

The web hands you AudioContext, a GainNode, and a polite suggestion to figure the rest out yourself. Phaser ships WebAudioSound. three.js ships PositionalAudio (and drei wraps it as `<PositionalAudio>` for r3f). Neither gives you scheduled non-musical ambience. Neither gives you a bus that can duck a category of sounds while a voice-over plays.

Here’s our WebAudioSound class. We’ll give you play(), pause(), and a volume number. Go figure out the rest.

That gap is why most web games never get past “music + SFX.” The work is not hard; it’s just that nothing in your engine of choice nudges you toward doing it.

Big Misconception: “ambience is just a quiet music loop”

I used to think this too. Drop a 30-second room-tone MP3 into the music slot, set the volume to 0.2, call it ambience. Done.

Here’s the problem. A static loop is the easiest thing in the world for the ear to recognize. Your brain figures out the seam (the moment the loop restarts) within about three repeats, and once it has the seam, it cannot un-hear it. Worse, a single unchanging loop has the same emotional content as a fridge hum. The room is on, but nobody’s home.

But isn’t a quiet loop better than nothing? Honestly, yes. A bed by itself is already a step up from silence. The trick is that the bed is the floor, not the ceiling. The thing that makes the room feel alive is what gets scheduled on top of the bed: a floorboard creak at second 14, a distant car at second 22, a bird panned off to the right at second 31. Each one a one-shot, never the same sample twice in a row, never quite at the same volume.

An analogy that helped me. Stand still in a real room for a minute and listen. The “ambience” you hear is not a single texture. It’s a refrigerator compressor cycling on, a neighbor’s door, a creak as the floor settles, a gust against a window. None of those things are looping. They’re events on top of a near-silent bed. A Soundscape system is just that, on a timer.

What “good” looks like in three contexts

The same engine fits all three. What changes is how much you lean on each part.

2D Phaser games. Bed keyed to the scene. Emitters scheduled in scene update or via timers. The listener position rarely moves, so pan is decorative rather than spatial. The win here is variety: three or four samples per emitter with last-picked exclusion turns a static loop into something the ear cannot lock onto. Cheap, huge.

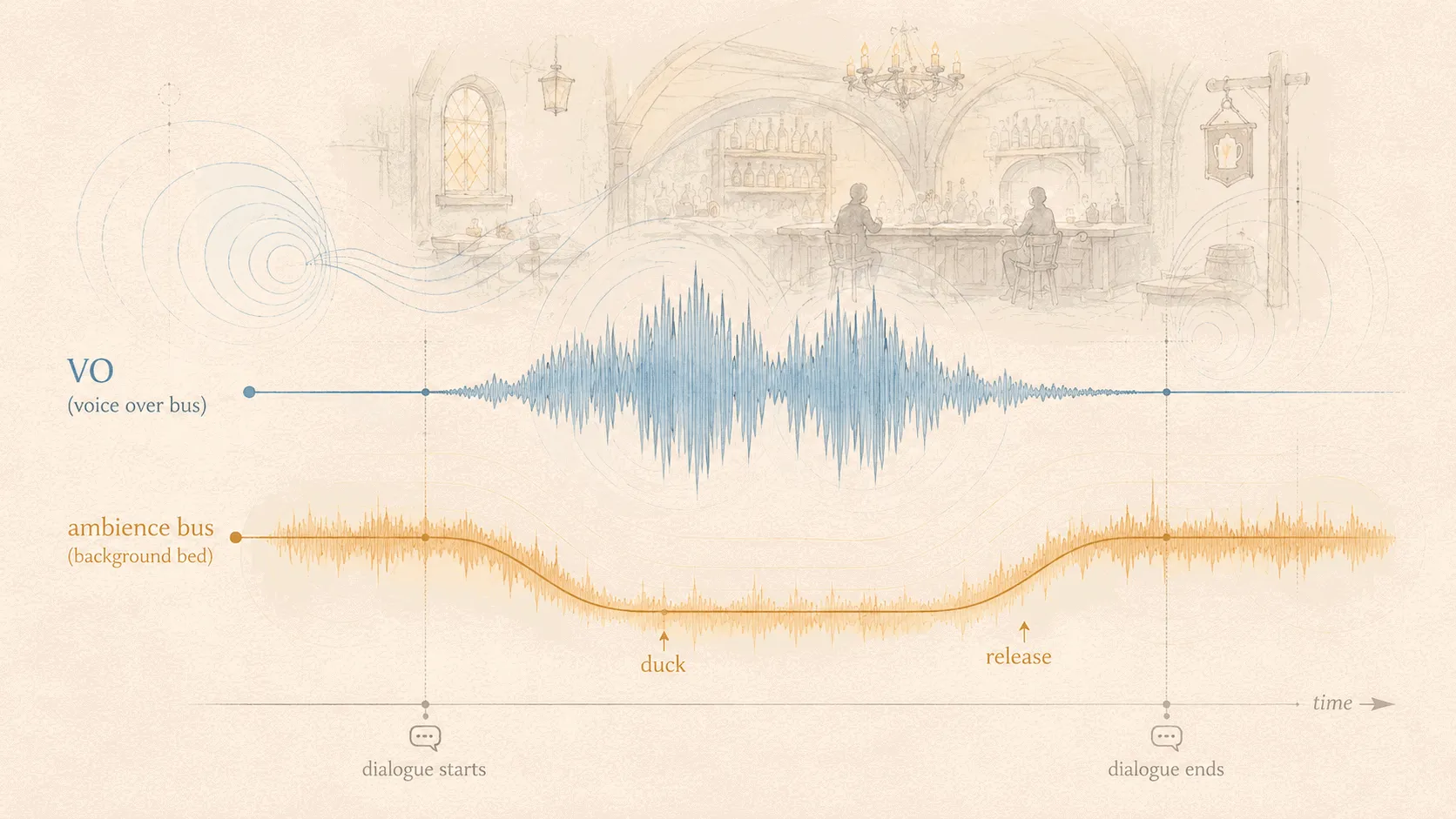

3D three.js or r3f games. The bed is still 2D. A room tone has no position; it is everywhere. Emitters can be either 2D-randomized or true positional via PositionalAudio for things that should pan as the camera turns. Ducking starts to matter more here, because dialog and narration get buried under ambience faster in a 3D scene, where you also have music and footsteps and UI all competing.

Narrative scenes. Ducking is the single biggest win. Without it, voice-over fights ambience and you end up cranking the VO bus until ambience is a whisper. With a 250ms duck on the ambience bus, the VO sits naturally, and the room comes back as soon as the line ends.

The room comes back. That’s the goal.
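Here is a minimal sketch of what a 250ms duck could look like on a Web Audio gain parameter. The helper names (rampBus, duck, unduck) and the 0.3 duck depth are my assumptions, not something from Part 2. The small GainParamLike interface only mirrors the AudioParam methods the sketch calls, so it type-checks outside a browser too; a real GainNode's gain property fits it.

```typescript
// Minimal structural stand-in for the parts of AudioParam used below.
interface GainParamLike {
  value: number;
  cancelScheduledValues(time: number): unknown;
  setValueAtTime(value: number, time: number): unknown;
  linearRampToValueAtTime(value: number, time: number): unknown;
}

// Ramp a bus gain to `target` over `ms` milliseconds, starting at `now`
// (AudioContext.currentTime, in seconds). Hypothetical helper.
function rampBus(gain: GainParamLike, target: number, now: number, ms = 250): void {
  gain.cancelScheduledValues(now);      // drop any ramp still queued
  gain.setValueAtTime(gain.value, now); // pin the current level as the ramp start
  gain.linearRampToValueAtTime(target, now + ms / 1000);
}

// Duck the ambience bus while a voice-over line plays, then bring it back.
// The 0.3 depth is an assumed value; tune it by ear.
const duck = (gain: GainParamLike, now: number): void => rampBus(gain, 0.3, now);
const unduck = (gain: GainParamLike, now: number): void => rampBus(gain, 1.0, now);
```

In a browser you would call something like duck(ambienceBus.gain, ctx.currentTime) when the line starts and unduck(...) when it ends, where ambienceBus is a GainNode that every bed and emitter routes through. The bed never stops playing; only the bus level moves.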

Ducking A/B (interactive demo): the voice-over line “You finally made it to the tavern.” plays over BG music standing in as the bed.

The three knobs that buy the most realism

Most of what makes a Soundscape feel alive comes down to three things. Skip any of them and it sounds like a tape loop.

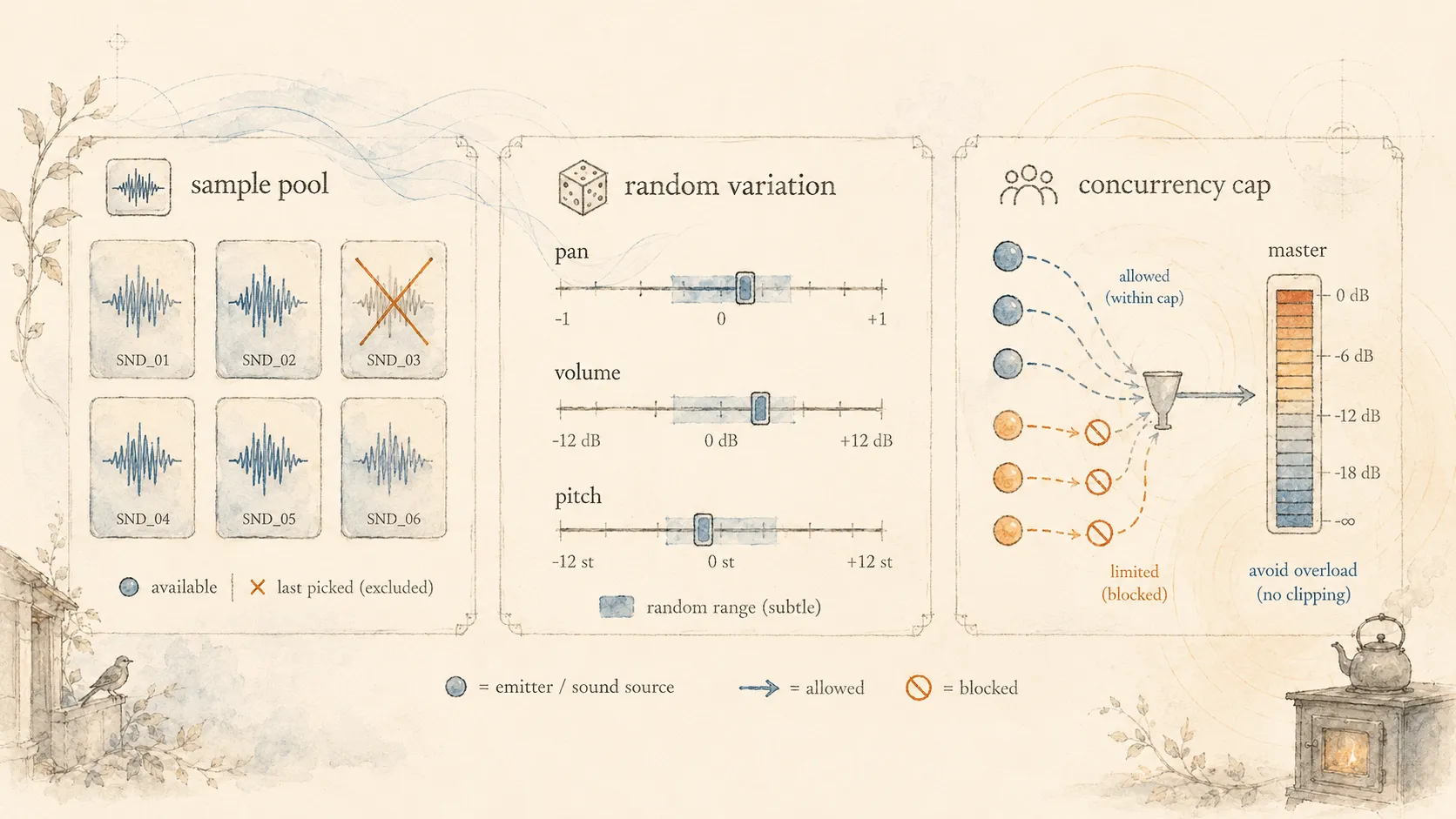

1. A Sample Pool per emitter, with last-picked exclusion. Each emitter (say, “creaking floorboard”) holds three to six short samples. Each fire picks one at random, but never the one it just played. This is the single biggest perceptual upgrade per byte of work. The ear locks onto immediate repeats faster than it locks onto anything else.

2. Random pan, volume, and pitch within tight ranges. Pan ±0.4, volume 0.7 to 1.0, rate 0.95 to 1.05. Tight enough that nothing sounds broken; wide enough that no two fires are identical.

Pitch variance is the easiest one to overdo. Past about ±5%, your samples start sounding chipmunky or sluggish. Ask me how I know.

3. A Concurrency Cap. maxConcurrentEmitterInstances is the cheap insurance policy. A stretch of bad RNG will occasionally fire three emitters within 200ms; without a cap, that stretch craters your mix and clips the master. Cap it at three or four, and the cap will fire maybe once a minute, inaudibly.

Three knobs. That’s it.
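The first two knobs fit in a few lines. This is a sketch under my own naming (pickIndex, randomFireParams, and the Rng type are all hypothetical), using the ranges quoted above. It assumes a non-empty pool.

```typescript
type Rng = () => number; // returns a float in [0, 1), like Math.random

// Pick a random index into a pool of `size` samples, never repeating `last`.
// Pass null for `last` on the first fire.
function pickIndex(size: number, last: number | null, rng: Rng = Math.random): number {
  if (size <= 1) return 0; // nothing to exclude with a single sample
  if (last === null) return Math.floor(rng() * size);
  // Draw from the size-1 remaining slots, then step over the excluded one.
  const i = Math.floor(rng() * (size - 1));
  return i >= last ? i + 1 : i;
}

const between = (lo: number, hi: number, rng: Rng): number => lo + rng() * (hi - lo);

interface FireParams {
  pan: number;    // -1 (left) .. 1 (right)
  volume: number; // linear gain
  rate: number;   // playback rate, which also shifts pitch
}

// Ranges from the text: pan ±0.4, volume 0.7 to 1.0, rate 0.95 to 1.05.
function randomFireParams(rng: Rng = Math.random): FireParams {
  return {
    pan: between(-0.4, 0.4, rng),
    volume: between(0.7, 1.0, rng),
    rate: between(0.95, 1.05, rng),
  };
}
```

Each emitter keeps its own last index and calls both functions on every fire. Drawing from size-1 slots and skipping the excluded one keeps the remaining samples equally likely, which a "re-roll until different" loop also does but with an unbounded retry count.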

Parameter playground (interactive demo): the three knobs, live. Sample pool:

- cups-clanging
- cash-register
- phone-ringing
- espresso-machine

Where’s the catch?

There is no free lunch, so:

- Authoring three to six samples per emitter is more work than wiring up one. The variety budget is real.

- A concurrency cap means an emitter you wanted to hear will occasionally get dropped. Almost always fine; sometimes a tell.

- Ducking adds bus complexity, which adds a place for bugs to hide. If you have one bus today, you’ll have four tomorrow.

- The whole layer is invisible when it works. Nobody on the team will notice. That is the point, but it does make it a tough sell in a sprint review.
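For the concurrency cap trade-off specifically, one way to keep the drops honest is to count them. A minimal sketch, assuming a hypothetical ConcurrencyGate class (the name and shape are mine, not Part 2's):

```typescript
// Hypothetical gate that enforces a cap on simultaneous emitter instances
// and counts how many fires it has swallowed.
class ConcurrencyGate {
  private active = 0;
  private readonly max: number;
  dropped = 0; // fires rejected by the cap so far, useful when tuning

  constructor(max: number) {
    this.max = max;
  }

  // Returns a release function if a slot is free, or null if the fire is dropped.
  tryAcquire(): (() => void) | null {
    if (this.active >= this.max) {
      this.dropped++;
      return null;
    }
    this.active++;
    let released = false;
    return () => {
      if (released) return; // guard against a double release
      released = true;
      this.active--;
    };
  }
}
```

An emitter would call tryAcquire() before playing, skip the fire on null, and call the returned function from the sample's end callback. If dropped climbs faster than you expected during a playtest, the intervals are too tight or the cap is too low.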

Anti-patterns

A short list of things that look like they should work and do not. I have written every one of these at least once.

- Looping the bed at full volume, no fade-in. First time the scene loads, the player hears a hard cut into ambience. A 500ms fade-in costs nothing and softens that cut.

- Hardcoded `<audio>` tags. No bus routing, no ducking, no per-instance volume. You cannot duck what you cannot address. Use Web Audio (or a wrapper like Howler) for anything that needs to coexist with other sounds.

- One Howl per emitter fire. Constructing a new Howl every time an emitter triggers leaks memory and re-decodes the sample. Construct once at load; trigger `play()` per fire.

- “Duck by pausing the bed.” Pausing kills the loop’s phase. You get a click on resume. Duck by lowering the bus gain, not by stopping playback.

- Forgetting to dispose timers on scene change. Scene changes are when soundscape bugs go feral. A `setTimeout` armed in scene A will happily fire an emitter in scene B if you do not clear it. Track every timer id; clear them all on dispose; flip a `disposed` flag and check it before re-arming inside the recursion.

Track your timers. Always has been.
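The timer advice is the one most worth seeing in code. This is a sketch with a hypothetical EmitterTimer class, not the engine from Part 2: it tracks every timer id, clears them all on dispose, and checks a disposed flag before re-arming.

```typescript
// Hypothetical emitter scheduler. Names are illustrative only.
class EmitterTimer {
  private readonly timers = new Set<ReturnType<typeof setTimeout>>();
  private disposed = false;
  private readonly onFire: () => void;
  private readonly minMs: number;
  private readonly maxMs: number;

  constructor(onFire: () => void, minMs: number, maxMs: number) {
    this.onFire = onFire;
    this.minMs = minMs;
    this.maxMs = maxMs;
  }

  // Number of armed timers, exposed for debugging and tests.
  get pending(): number {
    return this.timers.size;
  }

  start(): void {
    this.arm();
  }

  private arm(): void {
    if (this.disposed) return; // check the flag before every re-arm
    const delay = this.minMs + Math.random() * (this.maxMs - this.minMs);
    const id = setTimeout(() => {
      this.timers.delete(id);
      if (this.disposed) return; // the scene changed while we were waiting
      this.onFire();
      this.arm(); // recurse with a fresh random delay
    }, delay);
    this.timers.add(id);
  }

  dispose(): void {
    this.disposed = true;
    this.timers.forEach((id) => clearTimeout(id));
    this.timers.clear();
  }
}
```

Call dispose() from whatever your host uses for teardown (a scene shutdown handler, an effect cleanup, and so on). The flag check inside the callback covers the case where a timer has already been dequeued when dispose() runs, which clearTimeout alone cannot catch.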

What’s next

Part 2 walks through a pluggable implementation: one engine-agnostic class, a six-function bus adapter contract, and three host bindings (React, Phaser, imperative three.js). The engine is about 150 lines. What varies between projects is the bus underneath it and the lifecycle binding above it.

Both are smaller than you would guess. If you’ve ever stood in one of your own levels and felt like the lights were on between takes, I think you’re going to enjoy the fix.

Stay in touch

Don't miss out on new posts or project updates. Hit me up on X for updates, queries, or some good ol' tech talk.

Follow @zkMake